Graphics Card Scoop: What’s the Deal with GPUs for Games, AI, and More?

Ever wonder what makes your favorite game look crazy real? Or what powers those wild AI breakthroughs we keep hearing about? It’s not just your regular computer brain, the CPU. We’re talking about the graphics card and the chip at its heart, the Graphics Processing Unit (GPU). And it’s not just some fancy extra for your gaming PC; this thing is the engine. It seriously drives a big chunk of our digital world.

GPUs Are Super Important, Not Just for Games

Forget just making pixels look pretty! While every gamer knows a top-tier graphics card is key for the newest titles, GPUs have taken on a hella important mission way beyond rendering virtual worlds. Think digital transformation. On a global scale. We’re talking Artificial Intelligence. Machine Learning. Cryptography. Even the nitty-gritty of cybersecurity. Almost by accident, these cards found a second calling: the same math that draws pixels turns out to be great for crunching data. They’re essential tools now, especially with so much data to process.

How These Things Work: Parallel Power!

Picture two kinds of artists in a workshop.

One guy’s a master painter. Super talented. Can create a complex portrait or a sweeping landscape, blending colors perfectly. But here’s the catch: one canvas at a time. That’s it. That’s your CPU. It handles complex tasks. One by one.

And now, picture a whole team. Hundreds. All working on a massive mural. Each painter? Simple stuff. Maybe a straight line. Fill a blue section. Repeat a basic pattern. Alone, they’re not as good. But put them all together, working simultaneously? Boom. They can cover a colossal wall. In no time flat. That’s your GPU. It’s built for parallel processing. Chops big, tricky problems into thousands of smaller, simpler tasks. And attacks them all at once. Like a swarm.
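The mural idea can be sketched in a few lines of Python. This is only an illustration of the structure (split the wall, hand each section to a worker, stitch the results together). The names `paint_section`, `split`, and `paint_wall` are made up for this example, and Python threads will not give GPU-style speedups, so treat it as a picture of the idea rather than a benchmark.

```python
from concurrent.futures import ThreadPoolExecutor


def paint_section(section):
    """One 'painter': a simple, independent job (here, doubling each value)."""
    return [2 * value for value in section]


def split(wall, painters):
    """Cut the wall into roughly equal sections, one per painter."""
    size = max(1, -(-len(wall) // painters))  # ceiling division, at least 1
    return [wall[i:i + size] for i in range(0, len(wall), size)]


def paint_wall(wall, painters=4):
    """Give every section to its own worker, then stitch the results together."""
    with ThreadPoolExecutor(max_workers=painters) as pool:
        # map() keeps the sections in their original order.
        finished = list(pool.map(paint_section, split(wall, painters)))
    return [value for section in finished for value in section]


print(paint_wall(list(range(10))))  # [0, 2, 4, 6, 8, 10, 12, 14, 16, 18]
```

The key point is that no painter needs to wait for another: every section can be finished independently, which is exactly the kind of work a GPU is built for.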

GPU Power: From Millions to Trillions!

GPUs? They’re wild. Computationally. And we measure that power by how many calculations a chip can pull off every single second. Perspective? Running a classic like 1996’s Super Mario 64 took about 100 million calculations a second. And Minecraft in 2011? That jumped to a staggering 100 billion. Boom.

But today’s big games, like Cyberpunk 2077, demand something truly insane. We’re talking about 36 trillion calculations. Every second. That’s the muscle needed to render intricate 3D worlds built from huge numbers of tiny triangles, calculate light reflections and shadows, and keep everything buttery smooth at 60 frames per second. Trillions. Crazy jump.
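To put those jumps in numbers, here is a quick back-of-the-envelope script. It uses only the rough figures quoted above (100 million, 100 billion, 36 trillion calculations per second, and 60 frames per second); the helper names are just for this sketch.

```python
# Calculations per second, as quoted in the article (rough figures).
SUPER_MARIO_64 = 100_000_000          # about 100 million (1996)
MINECRAFT = 100_000_000_000           # about 100 billion (2011)
CYBERPUNK_2077 = 36_000_000_000_000   # about 36 trillion
FRAMES_PER_SECOND = 60


def times_more(old, new):
    """How many times more calculations per second the newer game needs."""
    return new // old


def per_frame(per_second, fps=FRAMES_PER_SECOND):
    """Calculation budget available for a single frame."""
    return per_second // fps


print(times_more(SUPER_MARIO_64, MINECRAFT))       # 1000
print(times_more(MINECRAFT, CYBERPUNK_2077))       # 360
print(times_more(SUPER_MARIO_64, CYBERPUNK_2077))  # 360000
print(per_frame(CYBERPUNK_2077))                   # 600000000000
```

So by these figures, a single Cyberpunk 2077 frame gets a budget of roughly 600 billion calculations, more than Minecraft needed for several whole seconds.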

Don’t Buy! Just Rent Your GPU Power in the Cloud!

Not everyone can just drop a ton of cash on a monster graphics card. That’s where cloud GPUs come in. Cloud computing? It lets individuals and companies rent huge GPU power. On demand.

Picture this: an architect chilling in Laguna Beach with a basic laptop taps into serious power in a data center far away. They’re hooked up to the kind of processing oomph usually reserved for big studios. Running complex 3D design, animation, or visual effects software. Like it’s right there. On their own desk.

This isn’t just convenient; it’s a huge game-changer. Especially for AI companies. Need hundreds, even thousands of GPUs for training their massive models? Got it. Killer deal. High tech without the massive upfront cost.
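Whether renting actually beats buying depends on how many hours you need. Here is a rough sketch of the break-even math. The prices below are made-up placeholders, not real quotes, and the model ignores electricity, resale value, and storage or network fees.

```python
# Hypothetical placeholder prices, purely for illustration.
CARD_PRICE = 2000.0   # assumed purchase price of a high-end card, in dollars
HOURLY_RATE = 1.0     # assumed cloud rental price per GPU-hour, in dollars


def break_even_hours(purchase_price, hourly_rate):
    """Hours of rental after which buying would have cost the same."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return purchase_price / hourly_rate


def cheaper_option(purchase_price, hourly_rate, expected_hours):
    """Return 'rent' or 'buy' for the expected number of GPU-hours."""
    rental_cost = hourly_rate * expected_hours
    return "rent" if rental_cost < purchase_price else "buy"


print(break_even_hours(CARD_PRICE, HOURLY_RATE))     # 2000.0
print(cheaper_option(CARD_PRICE, HOURLY_RATE, 300))  # rent
print(cheaper_option(CARD_PRICE, HOURLY_RATE, 5000)) # buy
```

With these placeholder numbers, an occasional user comes out ahead by renting, while someone running a GPU around the clock would eventually be better off owning one. Plug in real prices before drawing any conclusions.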

VRAM: Fast Memory!

Another key part of your graphics card? Its own dedicated memory. VRAM. This stuff? It moves data way faster than the regular RAM your CPU uses. Imagine regular RAM shuffling data in single-file lines; VRAM is a superhighway. Multiple lanes. Zips data around. Crazy speeds.

Modern VRAM, like GDDR6 or GDDR7, can transfer up to nearly 2 terabytes of data per second on the fastest cards. Regular RAM? Roughly 64 gigabytes per second. That insane data flow is super important, because the GPU has to grab all the information needed for killer high-res graphics, tricky simulations, and chunky AI models. Without it, all those cores would just sit around waiting for data.
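Here is what that bandwidth gap means in practice, as a rough sketch. It takes 2 terabytes per second as the VRAM figure and 64 gigabytes per second for regular RAM, as quoted above. The 16 GB payload is an assumed example size, and real transfers never hit the peak rate exactly.

```python
GB = 10**9  # bytes in a gigabyte (decimal)

RAM_BANDWIDTH = 64 * GB     # bytes per second, figure quoted in the article
VRAM_BANDWIDTH = 2000 * GB  # bytes per second, "nearly 2 terabytes" rounded up


def transfer_seconds(payload_bytes, bandwidth_bytes_per_second):
    """Ideal time to stream a payload once at peak bandwidth."""
    return payload_bytes / bandwidth_bytes_per_second


payload = 16 * GB  # assumed example: 16 GB of textures or model weights

ram_time = transfer_seconds(payload, RAM_BANDWIDTH)
vram_time = transfer_seconds(payload, VRAM_BANDWIDTH)

print(f"Regular RAM: {ram_time * 1000:.1f} ms")   # Regular RAM: 250.0 ms
print(f"VRAM:        {vram_time * 1000:.1f} ms")  # VRAM:        8.0 ms
```

At 60 frames per second a frame lasts under 17 milliseconds, so by these figures only the VRAM-class bandwidth could sweep through that much data within a single frame.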

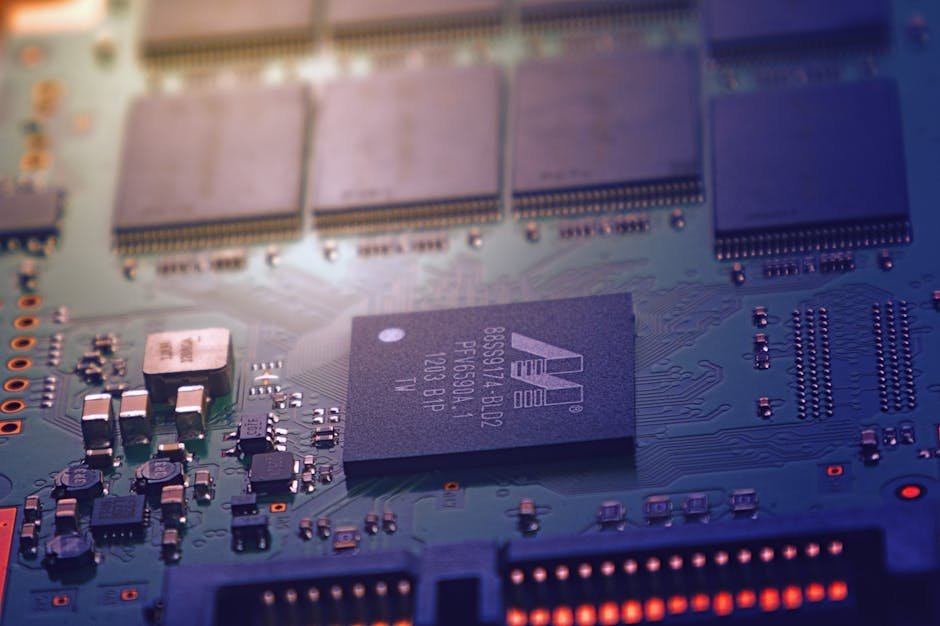

Manufacturer Tricks: How They Make So Many Different Cards

Ever notice how different graphics card models from the same generation look kinda related? Good reason. Manufacturers often start with one big, powerful chip design. But during manufacturing, tiny flaws happen. Instead of just chucking a whole expensive chip over a few bad spots? They just turn those bits off.

The result? Same core chip. Repurposed. A perfect chip? Top-tier card. Think an NVIDIA RTX 3090 Ti with all its cores active. But a chip with a few minor defects? Becomes a slightly less powerful, but still awesome, RTX 3080. Smart. Cuts waste. Gives us more choices.
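This sorting step is often called binning, and the idea fits in a tiny lookup. The sketch below is a simplification: the core counts are the published CUDA core figures for these two cards as best I know them, and real binning also weighs things like clock speed and memory, so treat the thresholds and the `bin_chip` helper as illustrative.

```python
# (minimum working cores, product name), highest tier first.
# Core counts are illustrative thresholds based on published specs.
TIERS = [
    (10752, "RTX 3090 Ti"),  # every core on the chip works
    (8704, "RTX 3080"),      # some defective cores switched off
]


def bin_chip(working_cores):
    """Return the best product a chip qualifies for, or None if it fits no tier."""
    for required_cores, product in TIERS:
        if working_cores >= required_cores:
            return product
    return None


print(bin_chip(10752))  # RTX 3090 Ti
print(bin_chip(10000))  # RTX 3080
print(bin_chip(8000))   # None
```

A chip with 10,000 good cores cannot be sold as the top card, but it easily clears the bar for the next one down, with the leftover cores simply disabled.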

The Cloud Loves GPUs. For Our Remote Future

Remote workers crunching big data. AI labs building neural networks. GPUs? Crucial. In the cloud. They give us scalable, on-demand processing capability. Changes how businesses run. Fuels groundbreaking research. And another thing: without cloud GPUs, lots of today’s big projects would be too expensive. For countless startups. Even for big, established players.

Quick FAQs

Q: Why are GPUs quicker than CPUs for some stuff?

A: GPUs do parallel processing. Thousands of simple calculations. All at once. CPUs? More versatile for complex, sequential tasks. But only handle a limited number of calculations at a time.

Q: Can a Graphics Card do AI tasks without a super CPU?

A: A CPU is always the brain of the computer. Gotta have it. But a powerful GPU can take the heaviest AI processing off its plate. Way faster than a CPU on its own. The GPU’s just great for the math AI models need.

Q: What’s the best part about renting GPU power in the cloud?

A: Main benefits: save cash, super flexible. You get cutting-edge GPU technology. Huge processing power. Without buying and maintaining big hardware. No giant upfront cost. Pay for what you use. When you need it.